Activation Functions

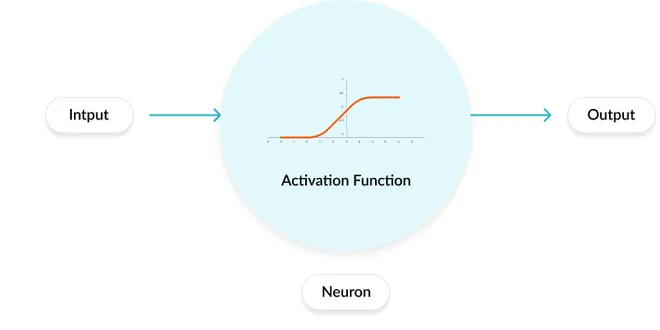

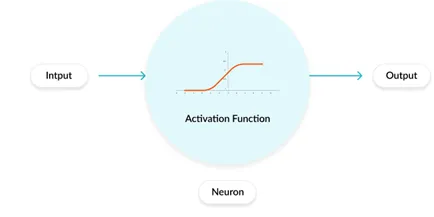

Activation functions are crucial components in neural networks that determine the output of each neuron based on its input. They introduce non-linearity into the model, allowing the network to learn complex patterns and relationships within the data. Without activation functions, a neural network would behave like a linear model, limiting its ability to solve intricate problems. Various types of activation functions exist, including linear, sigmoid, and ReLU, each with unique properties and applications. Understanding these functions is essential for designing effective neural network architectures and improving their performance in tasks such as classification and regression.

ACTIVATION FUNCTIONS

Activation functions are the equations that determine the output of a neural network. The main purpose of an activation function is to introduce non-linearity to the neural network.

📚 Read more at Analytics Vidhya

Activation Functions (Part 1)

An activation function is applied to the output of a neuron and allows the network to learn more complex functions as it grows deeper. It can also be thought of as a mapping that modifies the…

📚 Read more at Analytics Vidhya

What is an activation function?

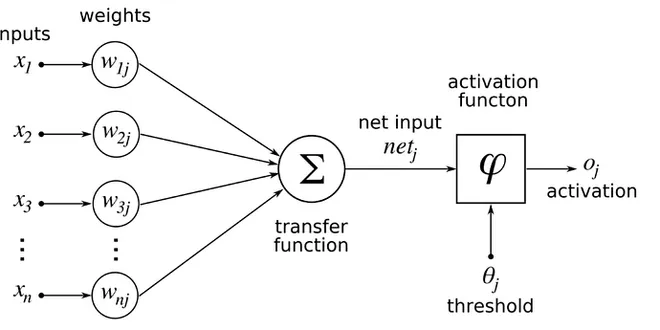

The activation function defines the output of a neuron (node) given an input or a set of inputs (the outputs of multiple neurons). It mimics the stimulation of a biological neuron. The output of the…

📚 Read more at Towards Data Science

Activation Functions — All You Need To Know!

An activation function is added to an artificial neural network to help the network learn complex patterns in the data. When compared with a neuron-based model that is in…

📚 Read more at Analytics Vidhya
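To make "learn complex patterns" concrete, here is a minimal Python sketch of the classic XOR construction (a standard textbook example, not taken from the excerpted article). XOR is not linearly separable, so no purely linear model can compute it, but a single hidden layer with ReLU can. The weights and biases are hand-picked assumptions chosen for illustration, not learned values.

```python
# Minimal sketch: a 2-2-1 network with hand-picked (hypothetical) weights
# that computes XOR. Without the ReLU, the whole network would be linear
# and could not produce this truth table.


def relu(z: float) -> float:
    """Rectified linear unit: max(0, z)."""
    return max(0.0, z)


def xor_net(x1: float, x2: float) -> float:
    # Hidden layer: both neurons use weights (1, 1); biases are 0 and -1.
    h1 = relu(x1 + x2)
    h2 = relu(x1 + x2 - 1.0)
    # Output layer: a purely linear combination of the hidden activations.
    return h1 - 2.0 * h2


for a in (0, 1):
    for b in (0, 1):
        print(a, b, xor_net(a, b))
```

The second hidden neuron only activates when both inputs are on, which lets the output layer cancel the (1, 1) case; that kink is exactly what the non-linearity provides.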

Activation Function

In deep learning, the activation function helps determine the output of the neural network. It can also normalize the output of each neuron (sigmoid and tanh, for example, squash it into a bounded range). Neural networks use non-linear activation functions, which…

📚 Read more at Analytics Vidhya

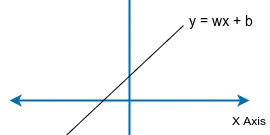

Why Activation Functions?

The activation function applies a non-linearity to z (the neuron's weighted input), which helps the network learn complex functions. If we remove all the activation functions, our network will only be learning linear…

📚 Read more at Analytics Vidhya
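A short pure-Python sketch of why removing every activation leaves a linear model: two stacked linear layers are equivalent to one linear layer with W = W2·W1 and b = W2·b1 + b2, so depth adds nothing. The weights, biases, and helper names (`matvec`, `matmul`, `add`) are illustrative assumptions. Inserting a ReLU between the layers breaks the equivalence.

```python
from typing import List

Vector = List[float]
Matrix = List[List[float]]


def matvec(W: Matrix, x: Vector) -> Vector:
    """Matrix-vector product W x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    """Matrix product: (A B)[i][j] = sum_k A[i][k] * B[k][j]."""
    return [
        [sum(A[i][k] * B[k][j] for k in range(len(B))) for j in range(len(B[0]))]
        for i in range(len(A))
    ]


def add(u: Vector, v: Vector) -> Vector:
    """Elementwise vector sum."""
    return [a + b for a, b in zip(u, v)]


def relu(z: float) -> float:
    return max(0.0, z)


# Arbitrary example weights and biases (hypothetical, not from the article).
W1 = [[1.0, 2.0], [3.0, -1.0]]
b1 = [0.5, -0.5]
W2 = [[2.0, 0.0], [1.0, 1.0]]
b2 = [1.0, 0.0]


def two_linear_layers(x: Vector) -> Vector:
    z1 = add(matvec(W1, x), b1)  # no activation applied to z1
    return add(matvec(W2, z1), b2)


# The same mapping expressed as ONE linear layer.
W = matmul(W2, W1)
b = add(matvec(W2, b1), b2)


def one_linear_layer(x: Vector) -> Vector:
    return add(matvec(W, x), b)


def two_layers_with_relu(x: Vector) -> Vector:
    a1 = [relu(z) for z in add(matvec(W1, x), b1)]  # non-linearity on z1
    return add(matvec(W2, a1), b2)


x = [1.5, -2.0]
print(two_linear_layers(x))     # [-3.0, 4.0]
print(one_linear_layer(x))      # [-3.0, 4.0]
print(two_layers_with_relu(x))  # [1.0, 6.0]
```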

Activation Functions

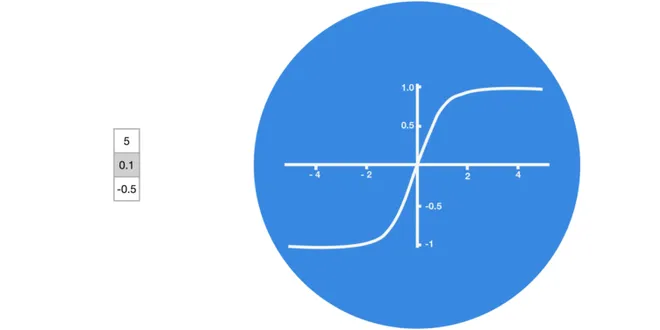

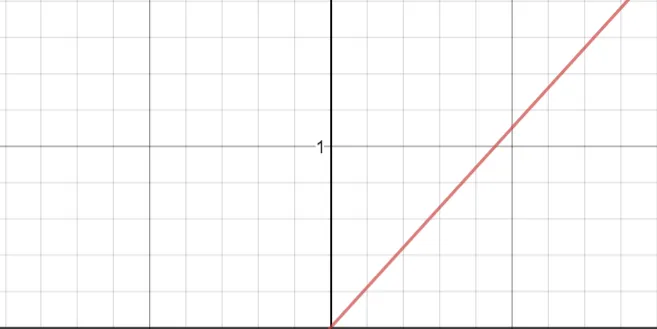

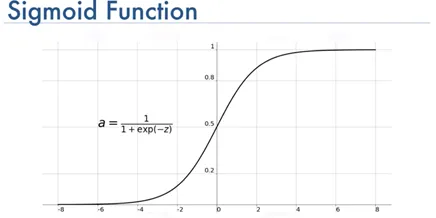

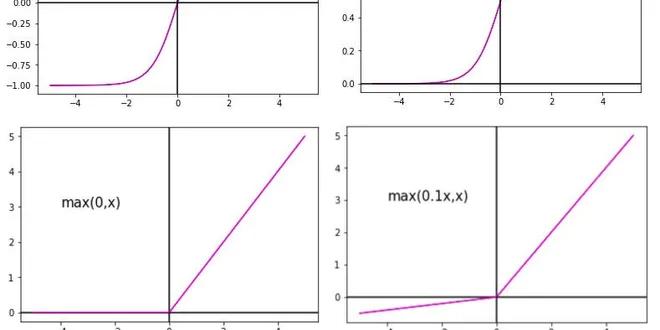

Activation Functions: Linear, ELU, ReLU, LeakyReLU, Sigmoid, Tanh, Softmax. Linear: a straight-line function where activation is proportional to input (which is the weighted sum from the neuron). Function Deriva...

📚 Read more at Machine Learning Glossary
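The functions listed in this entry can be sketched in plain Python, each elementwise function paired with its derivative (softmax operates on a whole vector, so it appears on its own). This is a minimal reference sketch, not the glossary's own code; the ELU `alpha=1.0` and Leaky ReLU `slope=0.01` defaults are assumptions, and using a ReLU gradient of 0 at exactly z = 0 is a convention.

```python
import math
from typing import List


def linear(z: float, a: float = 1.0) -> float:
    """Linear: output proportional to input (slope a)."""
    return a * z


def linear_prime(z: float, a: float = 1.0) -> float:
    return a  # constant gradient, independent of z


def elu(z: float, alpha: float = 1.0) -> float:
    """ELU: identity for z > 0, alpha * (e^z - 1) otherwise."""
    return z if z > 0 else alpha * (math.exp(z) - 1.0)


def elu_prime(z: float, alpha: float = 1.0) -> float:
    return 1.0 if z > 0 else alpha * math.exp(z)


def relu(z: float) -> float:
    """ReLU: max(0, z)."""
    return max(0.0, z)


def relu_prime(z: float) -> float:
    return 1.0 if z > 0 else 0.0  # convention: gradient 0 at z == 0


def leaky_relu(z: float, slope: float = 0.01) -> float:
    """Leaky ReLU: small non-zero slope for negative inputs."""
    return z if z > 0 else slope * z


def leaky_relu_prime(z: float, slope: float = 0.01) -> float:
    return 1.0 if z > 0 else slope


def sigmoid(z: float) -> float:
    """Sigmoid 1 / (1 + e^-z), branched to avoid overflow for large |z|."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def sigmoid_prime(z: float) -> float:
    s = sigmoid(z)
    return s * (1.0 - s)


def tanh(z: float) -> float:
    return math.tanh(z)


def tanh_prime(z: float) -> float:
    return 1.0 - math.tanh(z) ** 2


def softmax(zs: List[float]) -> List[float]:
    """Softmax over a vector; subtracting the max keeps exp() from overflowing."""
    m = max(zs)
    exps = [math.exp(z - m) for z in zs]
    total = sum(exps)
    return [e / total for e in exps]


for name, f in [("linear", linear), ("elu", elu), ("relu", relu),
                ("leaky_relu", leaky_relu), ("sigmoid", sigmoid), ("tanh", tanh)]:
    print(name, [round(f(z), 3) for z in (-2.0, 0.0, 2.0)])
print("softmax", [round(p, 3) for p in softmax([1.0, 2.0, 3.0])])
```

Sigmoid, tanh, and softmax bound their outputs, while linear, ReLU, and its variants do not; softmax additionally makes its outputs sum to 1, which is why it is typically used on a classifier's final layer.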

Types of Activation Functions in Neural Networks

The activation function is usually an abstraction representing the rate of action potential firing in the cell. In its simplest form, this function is binary: either the neuron is firing or…

📚 Read more at Analytics Vidhya
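In its simplest binary form, the activation is a threshold (step) function: the neuron either fires or it does not. A minimal sketch, assuming a threshold of 0 and that an input exactly at the threshold counts as firing (both are assumptions; the helper `step_layer` is hypothetical):

```python
from typing import List


def binary_step(z: float, threshold: float = 0.0) -> int:
    """Binary activation: 1 (firing) if z reaches the threshold, else 0 (not firing)."""
    return 1 if z >= threshold else 0


def step_layer(zs: List[float], threshold: float = 0.0) -> List[int]:
    """Apply the step function to each neuron's input independently."""
    return [binary_step(z, threshold) for z in zs]


print(step_layer([-1.5, -0.01, 0.0, 0.7]))  # [0, 0, 1, 1]
```

Because the step function's derivative is zero everywhere except at the threshold (where it is undefined), it gives gradient descent nothing to follow, which is one reason smooth or piecewise-linear functions such as sigmoid and ReLU are used in trainable networks instead.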

Activation Functions in Neural Networks

Activation functions are mathematical equations that determine the output of a neural network. The function is attached to each neuron in the network and is applied after the neuron calculates a weighted sum (with weights Wi) of its…

📚 Read more at Analytics Vidhya
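The weighted-sum-then-activate pattern for a single neuron can be sketched as below. The bias term `b`, the input values, and the weights are hypothetical additions for illustration; the excerpt itself only mentions the weighted sum. Passing the activation in as a function makes it easy to swap sigmoid for ReLU without touching the neuron.

```python
import math
from typing import Callable, Sequence


def sigmoid(z: float) -> float:
    return 1.0 / (1.0 + math.exp(-z))


def relu(z: float) -> float:
    return max(0.0, z)


def neuron(inputs: Sequence[float], weights: Sequence[float], bias: float,
           activation: Callable[[float], float]) -> float:
    """One artificial neuron: weighted sum of the inputs, then the activation."""
    if len(inputs) != len(weights):
        raise ValueError("inputs and weights must have the same length")
    z = sum(w * x for w, x in zip(weights, inputs)) + bias  # z = sum_i Wi * xi + b
    return activation(z)


x = [0.5, -1.0, 2.0]
w = [0.4, 0.3, 0.1]  # hypothetical weights Wi
b = 0.1              # hypothetical bias
print(neuron(x, w, b, sigmoid))
print(neuron(x, w, b, relu))
```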

Unraveling Activation Functions for Finance: A Practitioner’s Guide

What is an Activation Function? In machine learning, an activation function is a mathematical operation that determines the output of a neural network based on the inputs it receives. It is used in th...

📚 Read more at Python in Plain English

Classical Neural Net: Why/Which Activation Functions?

Activation functions are a family of functions whose purpose is to introduce non-linearity after a layer's computation. Indeed, without an activation function, no matter how much augmentation or…

📚 Read more at Towards Data Science

Activation Functions, Optimization Techniques, and Loss Functions

The activation function is a significant piece of a neural network. It is a mathematical equation that determines the output of the network. The function is attached to each neuron in the network and determines whether it…

📚 Read more at Analytics Vidhya