ML Fairness Metrics & Auditing

ML fairness metrics and auditing are essential parts of developing machine learning models. They help teams detect and reduce bias so that these systems produce more equitable outcomes. Fairness metrics evaluate how a model's decisions and errors vary across demographic groups, using criteria such as demographic parity (similar positive-prediction rates across groups), equal opportunity (similar true positive rates across groups), and equal accuracy (similar accuracy across groups). Auditing means systematically assessing a model to identify and mitigate potential biases and to check compliance with ethical standards. Together, these practices increase transparency, build trust, and support responsible AI use, leading to more inclusive and fair decision-making across applications.
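
For a binary classifier, the three criteria can be written as equalities between groups. The notation here is assumed for this sketch: $\hat{Y}$ is the model's prediction, $Y$ the true label, $A$ the protected attribute, and $a$, $b$ two of its groups. In practice, audits usually report the gap between the two sides of each equation rather than expecting exact equality.

```latex
\begin{aligned}
\text{Demographic parity:} &\quad P(\hat{Y}=1 \mid A=a) = P(\hat{Y}=1 \mid A=b) \\
\text{Equal opportunity:}  &\quad P(\hat{Y}=1 \mid Y=1, A=a) = P(\hat{Y}=1 \mid Y=1, A=b) \\
\text{Equal accuracy:}     &\quad P(\hat{Y}=Y \mid A=a) = P(\hat{Y}=Y \mid A=b)
\end{aligned}
```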

Fairness Metrics Won’t Save You from Stereotyping

Fairness metrics are often used to verify that machine learning models do not produce unfair outcomes across racial/ethnic groups, gender categories, or other protected classes. Here, I will…

📚 Read more at Towards Data Science

☝️⚖️ ML Fairness is Everybody’s Problem

📝 Editorial We typically associate fairness issues in machine learning (ML) models with large consumer tech startups like Facebook, Apple and Twitter. It seems easy enough to point the finger at bias...

📚 Read more at TheSequence

AI Fairness

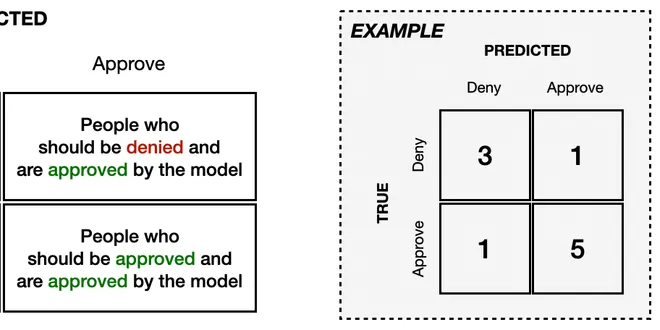

Introduction There are many different ways of defining what we might look for in a fair machine learning (ML) model. For instance, say we're working with a model that approves (or denies) credit card...

📚 Read more at Kaggle Learn Courses
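
The credit-card example hints at why the choice of definition matters: the same approve/deny decisions can satisfy one fairness criterion and violate another. The sketch below uses two hypothetical applicant groups with invented toy data, where `approved` marks the model's decision and `repaid` marks whether the applicant would have repaid. The function names are illustrative and are not taken from the Kaggle course.

```python
from typing import List


def approval_rate(approved: List[int]) -> float:
    """Share of applicants approved (the quantity demographic parity compares)."""
    return sum(approved) / len(approved)


def true_positive_rate(approved: List[int], repaid: List[int]) -> float:
    """Share of creditworthy applicants (repaid == 1) who were approved
    (the quantity equal opportunity compares).

    Assumes each group contains at least one creditworthy applicant.
    """
    approvals_of_creditworthy = [a for a, r in zip(approved, repaid) if r == 1]
    return sum(approvals_of_creditworthy) / len(approvals_of_creditworthy)


# Hypothetical toy data, invented for illustration: 1 = approved / 1 = would repay.
group_a = {"approved": [1, 1, 0, 0], "repaid": [1, 1, 0, 0]}
group_b = {"approved": [1, 1, 0, 0], "repaid": [1, 0, 1, 1]}

parity_gap = abs(approval_rate(group_a["approved"]) - approval_rate(group_b["approved"]))
opportunity_gap = abs(
    true_positive_rate(group_a["approved"], group_a["repaid"])
    - true_positive_rate(group_b["approved"], group_b["repaid"])
)

print(f"demographic parity gap: {parity_gap:.2f}")
print(f"equal opportunity gap:  {opportunity_gap:.2f}")
```

Both groups are approved at the same rate, so demographic parity holds, yet only one of the three creditworthy applicants in group B is approved versus both in group A, so equal opportunity fails.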

Fairness in Machine Learning (Part 1)

Contents: Fairness in Machine Learning; Evidence of the problem; Fundamental concept: Discrimination, Bias, and Fairness. 1. Fairness in Machine Learning: Machine learning algorithms substantially affect ev...

📚 Read more at Towards AI

How to define fairness to detect and prevent discriminatory outcomes in Machine Learning

This can be achieved by defining a metric that describes the notion of fairness in our model. For example, when looking at university admissions, we can compare admission rates of men and women…

📚 Read more at Towards Data Science
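
Comparing admission rates, as in the university example, reduces to computing a selection rate per group and then measuring how far apart the rates are. This is a minimal sketch, assuming applicant records arrive as hypothetical `(group, admitted)` pairs; it reports both the difference and the ratio of the rates. The 0.8 ratio threshold is an assumption borrowed from the "four-fifths rule" in US employment-selection guidelines and serves only as an example cut-off.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def admission_rates(records: Iterable[Tuple[str, bool]]) -> Dict[str, float]:
    """Admission rate per group from (group, admitted) pairs."""
    admitted: Dict[str, int] = defaultdict(int)
    total: Dict[str, int] = defaultdict(int)
    for group, was_admitted in records:
        total[group] += 1
        admitted[group] += int(was_admitted)
    return {g: admitted[g] / total[g] for g in total}


def compare_rates(rates: Dict[str, float]) -> Tuple[float, float]:
    """Return (difference, ratio) between the highest and lowest group rates.

    A difference of 0 (equivalently, a ratio of 1) means equal admission rates.
    """
    highest, lowest = max(rates.values()), min(rates.values())
    return highest - lowest, (lowest / highest if highest else 1.0)


# Hypothetical applicant records, invented for illustration.
records = (
    [("men", True)] * 30 + [("men", False)] * 70
    + [("women", True)] * 20 + [("women", False)] * 80
)

rates = admission_rates(records)
diff, ratio = compare_rates(rates)
# Assumed example cut-off: flag the model when the ratio falls below 0.8.
print(rates)
print(f"difference={diff:.2f}, ratio={ratio:.2f}, below 0.8: {ratio < 0.8}")
```

With these invented records, men are admitted at a rate of 0.30 and women at 0.20, a difference of 0.10 and a ratio of about 0.67. The difference is easier to read in absolute terms, while the ratio stays comparable when overall admission rates are low.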

[5 min read] Metrics to measure the performance of your classification ML models

Whilst there are many metrics available to evaluate a Classification ML model, in this post, I am going to focus on the ones I have seen being used most frequently.

📚 Read more at Analytics Vidhya
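
Most common classification metrics derive from the four cells of the confusion matrix (true and false positives and negatives). The sketch below computes accuracy, precision, recall and F1 for binary labels in plain Python. These are widely used choices, not necessarily the exact set the linked post covers, and reporting 0.0 for a zero denominator is an assumed convention.

```python
from typing import Dict, Sequence


def classification_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, recall and F1 for binary labels (1 = positive class)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)  # true positives
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)  # true negatives
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)  # false positives
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)  # false negatives

    accuracy = (tp + tn) / len(pairs)
    # Assumed convention: a metric whose denominator is zero is reported as 0.0.
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


metrics = classification_metrics([1, 0, 1, 1, 0, 0], [1, 0, 0, 1, 1, 0])
print(metrics)
```

Recall is the same quantity as the true positive rate, so computing these counts separately for each group yields the rates that fairness criteria such as equal opportunity and equal accuracy compare. For production work, scikit-learn's `sklearn.metrics` module provides `accuracy_score`, `precision_score`, `recall_score` and `f1_score`.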