Random Projection

Random Projection is a dimensionality reduction technique that maps high-dimensional data into a lower-dimensional space while approximately preserving the pairwise distances between data points. It works by multiplying the data by a random matrix, typically Gaussian or sparse, and trades a small, controlled loss of accuracy for computational efficiency. The Johnson-Lindenstrauss lemma underpins this approach: when the target dimension is large enough, the distances between points are distorted by at most a small factor with high probability. Random Projection is particularly useful when large, high-dimensional datasets must be processed quickly, which makes it a valuable tool in machine learning and data analysis.
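As a rough illustration of the idea, the following standard-library sketch projects a few random points with a dense Gaussian matrix and compares pairwise distances before and after. The helper names (`gaussian_random_matrix`, `project`), the sizes, and the seed are assumptions chosen for this example. It is not how any particular library implements the technique.

```python
import math
import random


def gaussian_random_matrix(n_components, n_features, rng):
    """Hypothetical helper: entries drawn from N(0, 1 / n_components)."""
    scale = 1.0 / math.sqrt(n_components)
    return [[rng.gauss(0.0, scale) for _ in range(n_features)]
            for _ in range(n_components)]


def project(points, matrix):
    """Multiply every point by the random matrix (one dot product per row)."""
    return [[sum(r * x for r, x in zip(row, point)) for row in matrix]
            for point in points]


rng = random.Random(0)            # fixed seed, only for repeatability
n_features, n_components = 500, 200

# Four arbitrary points in the original 500-dimensional space.
points = [[rng.uniform(-1.0, 1.0) for _ in range(n_features)] for _ in range(4)]

matrix = gaussian_random_matrix(n_components, n_features, rng)
projected = project(points, matrix)

# Ratio of projected distance to original distance for every pair of points.
ratios = [
    math.dist(projected[i], projected[j]) / math.dist(points[i], points[j])
    for i in range(len(points))
    for j in range(i + 1, len(points))
]
print([round(r, 3) for r in ratios])
```

The ratios should cluster around 1, with a spread that shrinks as `n_components` grows. In practice a vectorized library implementation would be far faster than these pure-Python loops.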

6.6. Random Projection

The sklearn.random_projection module implements a simple and computationally efficient way to reduce the dimensionality of the data by trading a controlled amount of accuracy (as additional varianc......

📚 Read more at Scikit-learn User Guide

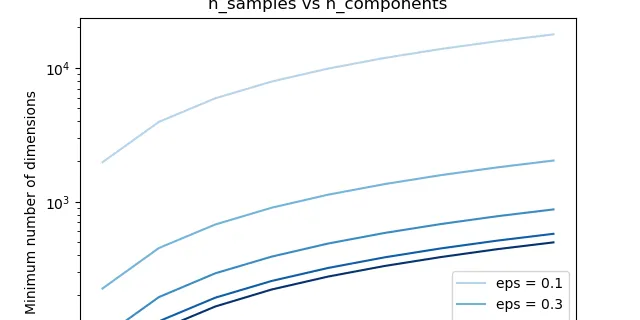

The Johnson-Lindenstrauss bound for embedding with random projections

The Johnson-Lindenstrauss lemma states that any high dimensional dataset can be randomly projected into a lower dimensional Euclid...

📚 Read more at Scikit-learn Examples
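To make the Johnson-Lindenstrauss bound concrete, the sketch below evaluates a commonly quoted form of it: the target dimension should be at least 4 ln(n) / (eps^2 / 2 - eps^3 / 3), where n is the number of samples and eps is the tolerated relative distortion. The function name `jl_min_dim` is an assumption made for this example. Truncating the result to an integer is also an assumption, chosen to match what scikit-learn's `johnson_lindenstrauss_min_dim` is understood to do.

```python
import math


def jl_min_dim(n_samples: int, eps: float) -> int:
    """Hypothetical helper: minimum target dimension under the JL bound."""
    if n_samples <= 0:
        raise ValueError("n_samples must be positive")
    if not 0.0 < eps < 1.0:
        raise ValueError("eps must lie strictly between 0 and 1")
    denominator = eps ** 2 / 2 - eps ** 3 / 3
    # Truncate toward zero (assumed convention, see the lead-in).
    return int(4 * math.log(n_samples) / denominator)


for eps in (0.5, 0.1):
    print(eps, jl_min_dim(1_000_000, eps))
```

Note that the bound depends only on the number of samples and the tolerated distortion, not on the original dimensionality. Tightening `eps` from 0.5 to 0.1 raises the required dimension by more than an order of magnitude.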