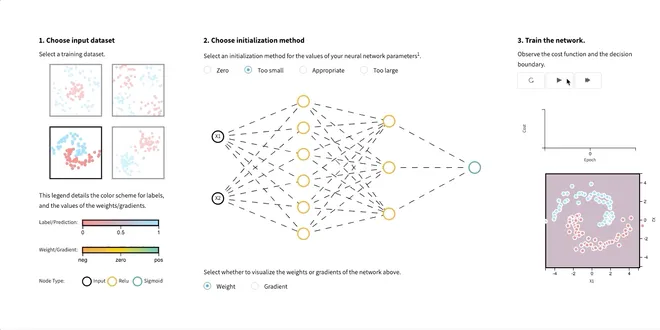

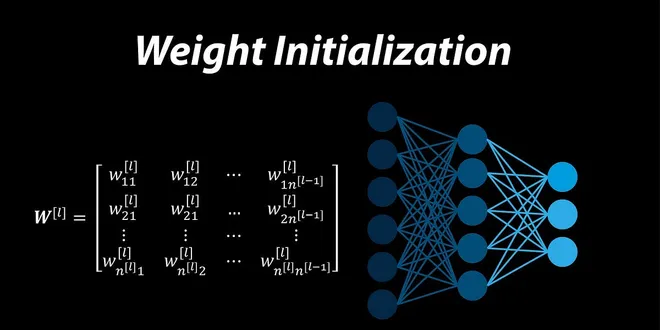

Weight Initialization

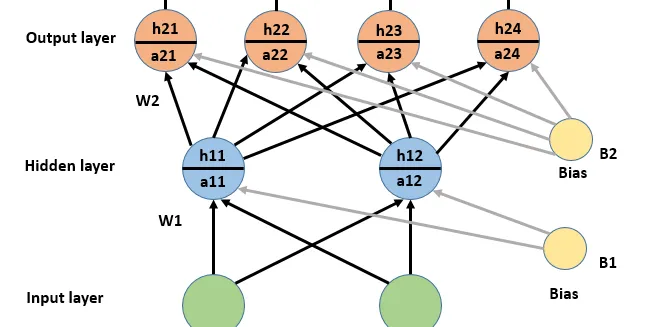

Weight initialization is a crucial step in the design of neural networks, affecting both how they train and how well they perform. It sets the initial values of the model's weights before training begins. A good initialization keeps activations and gradients at a workable scale from layer to layer, so that the optimization algorithm, typically stochastic gradient descent, can effectively minimize the loss function. Several techniques have been developed over time, such as Xavier (Glorot) and He (Kaiming) initialization, which scale the random initial weights by the size of the layer and are matched to the activation function: Xavier is commonly paired with tanh or sigmoid units, He with ReLU. These tailored approaches can significantly improve convergence speed and the overall effectiveness of deep learning models.
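As a rough illustration of the two schemes named above, the sketch below draws weights from a zero-mean normal distribution whose standard deviation depends on the layer size: `sqrt(2 / (fan_in + fan_out))` for Xavier and `sqrt(2 / fan_in)` for He. It uses only the Python standard library; the function names, the layer size of 256 by 128, and the fixed seed are illustrative assumptions, not part of any framework's API.

```python
import math
import random


def xavier_normal(fan_in, fan_out, rng):
    """Xavier/Glorot normal init: std = sqrt(2 / (fan_in + fan_out))."""
    std = math.sqrt(2.0 / (fan_in + fan_out))
    return [[rng.gauss(0.0, std) for _ in range(fan_out)] for _ in range(fan_in)]


def he_normal(fan_in, fan_out, rng):
    """He/Kaiming normal init: std = sqrt(2 / fan_in)."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_out)] for _ in range(fan_in)]


def sample_std(matrix):
    """Empirical standard deviation over all entries of a nested list."""
    values = [v for row in matrix for v in row]
    mean = sum(values) / len(values)
    return math.sqrt(sum((v - mean) ** 2 for v in values) / len(values))


# Hypothetical layer size and seed, chosen only for the demonstration.
rng = random.Random(0)
w_xavier = xavier_normal(256, 128, rng)
w_he = he_normal(256, 128, rng)

print("Xavier: target std", math.sqrt(2.0 / (256 + 128)), "sample std", sample_std(w_xavier))
print("He:     target std", math.sqrt(2.0 / 256), "sample std", sample_std(w_he))
```

In practice a deep learning framework's built-in initializers would normally be used instead; the point here is only that both schemes shrink the weight scale as the layer gets wider.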

Weight Initialization for Deep Learning Neural Networks

Last Updated on February 8, 2021. Weight initialization is an important design choice when developing deep learning neural network models. Historically, weight initialization involved using small rando...

📚 Read more at Machine Learning Mastery

Weights Initialization in Neural Network

Weight initialization helps a lot in optimization for deep learning. Without it, SGD and its variants would be much slower and would struggle to converge to the optimal weights. The aim of weight…

📚 Read more at Analytics Vidhya

Deep Learning Weight Initialization Techniques

A neural network is a constellation of neurons arranged in layers. Each layer is a mathematical transformation that can be linear, non-linear, or a combin...

📚 Read more at Towards AI

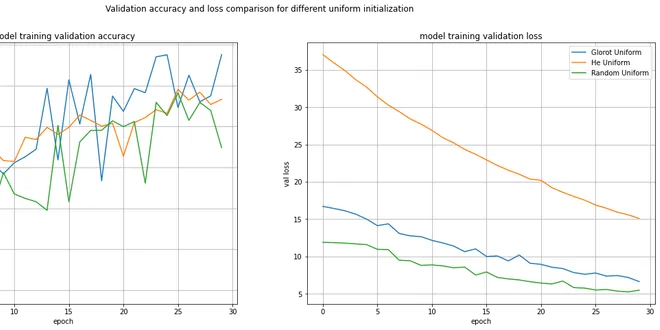

Weight Initialization for Neural Networks — Does it matter?

Weight initialization techniques change the behavior of the artificial neural network model over the course of its training. Hence we need to understand how the choice of weight (kernel) initializatio...

📚 Read more at Towards Data Science

Weight Initialization for Neural Networks

When training a neural network, one of the important design choices is how we initialize the weights. Before any learning even begins…

📚 Read more at Towards AI

Why better weight initialization is important in neural networks?

At the beginning of my deep learning journey, I always underrated weight initialization. I believed weights should be initialized to random values without knowing answers to the questions like why…

📚 Read more at Towards Data Science

Selecting the right weight initialization for your deep neural network

The weight initialization technique you choose for your neural network can determine how quickly the network converges or whether it converges at all. Although the initial values of these weights are…

📚 Read more at Towards Data Science

Understand Kaiming Initialization and Implementation Detail in PyTorch

Initialization is the process of creating the weights. In the code snippet below, we create a weight w1 randomly with a size of (784, 50). You may wonder why we need to care about initialization if the weight…

📚 Read more at Towards Data Science
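To give a feel for why the scale of a randomly created (784, 50) weight matters, the following sketch (not taken from the linked article) pushes random inputs through one linear layer followed by ReLU and compares two weight scales: unit-variance weights and Kaiming-scaled weights with standard deviation `sqrt(2 / 784)`. The standard-normal inputs, the batch of 50 samples, the seed, and the helper names are all assumptions made for the illustration, and plain Python is used rather than PyTorch.

```python
import math
import random

rng = random.Random(0)
fan_in, fan_out = 784, 50  # weight shape mentioned in the teaser above


def make_weight(std):
    """Create a fan_in x fan_out weight with zero-mean normal entries."""
    return [[rng.gauss(0.0, std) for _ in range(fan_out)] for _ in range(fan_in)]


def relu_output_rms(weight, n_samples=50):
    """Root-mean-square of ReLU(x @ weight) over random standard-normal inputs."""
    total, count = 0.0, 0
    for _ in range(n_samples):
        x = [rng.gauss(0.0, 1.0) for _ in range(fan_in)]
        for j in range(fan_out):
            z = sum(x[i] * weight[i][j] for i in range(fan_in))
            a = max(z, 0.0)  # ReLU
            total += a * a
            count += 1
    return math.sqrt(total / count)


naive_rms = relu_output_rms(make_weight(1.0))
kaiming_rms = relu_output_rms(make_weight(math.sqrt(2.0 / fan_in)))

print("unit-variance weights, output RMS:", naive_rms)
print("Kaiming-scaled weights, output RMS:", kaiming_rms)
```

With unit-variance weights the output scale is roughly twenty times that of the input after a single layer, while the Kaiming-scaled weights keep it close to 1, which is the property that lets the signal pass through many stacked ReLU layers without exploding or vanishing.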

Parameter Initialization

Now that we know how to access the parameters, let’s look at how to initialize them properly. We discussed the need for proper initialization in Section 5.4. The deep learning framework provides defa...

📚 Read more at Dive into Deep Learning Book

Initializing Weights for Deep Learning Models

Last Updated on April 8, 2023. In order to build a classifier that accurately classifies the data samples and performs well on test data, you need to initialize the weights in a way that the model conv...

📚 Read more at MachineLearningMastery.com

Weight Initialization in Deep Neural Networks

Weight and bias are the adjustable parameters of a neural network, and during the training phase, they are changed using the gradient descent algorithm to minimize the cost function of the network…

📚 Read more at Towards Data Science

Weight Initialization and Activation Functions in Deep Learning

Developing effective deep learning models requires fine-tuning. Take the time to select the correct activation function and weight initialization method.

📚 Read more at Towards Data Science