Gated Recurrent Unit

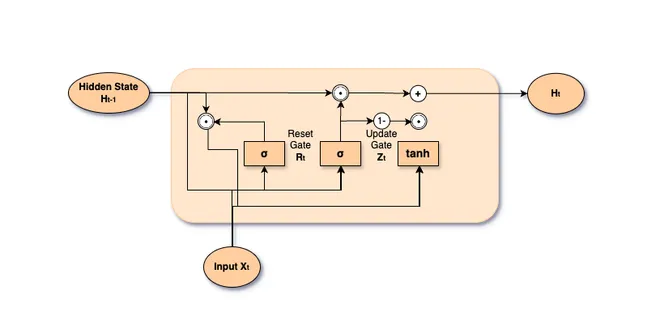

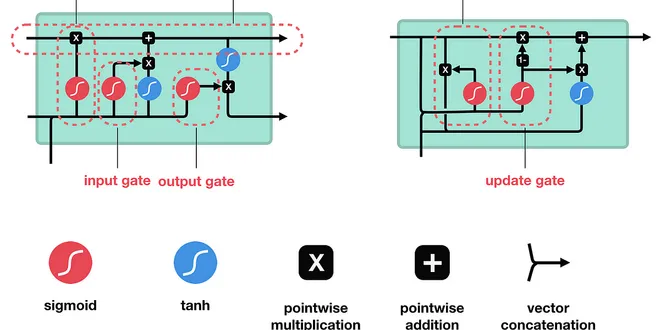

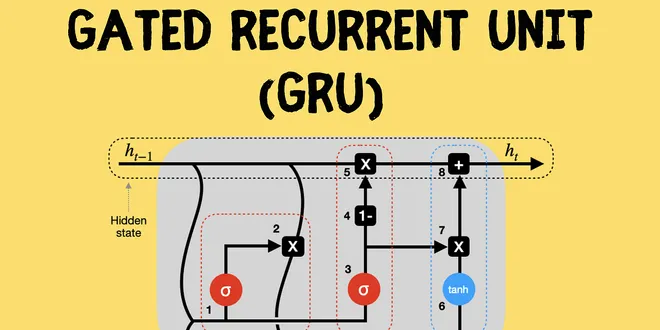

Gated Recurrent Units (GRUs) are a type of recurrent neural network (RNN) architecture designed to process sequential data efficiently. Introduced in 2014 by Cho et al., GRUs simplify the structure of Long Short-Term Memory (LSTM) networks while retaining their core gating mechanism. They use two gates: the update gate, which controls how much of the previous hidden state is carried forward, and the reset gate, which controls how much of the previous state is used when computing the new candidate state. By regulating the flow of information in this way, GRUs help mitigate the vanishing gradient problem, which makes them effective for tasks involving long-term dependencies, such as natural language processing and time-series analysis. Because they have fewer parameters than LSTMs, they offer a good balance between performance and computational efficiency.
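To make the two gates concrete, here is a minimal pure-Python sketch of one GRU step and a forward pass over a short sequence. It assumes the PyTorch-style formulation, in which the reset gate scales the recurrent term inside the candidate state and the new state is `(1 - z) * n + z * h_prev`. The names `init_gru`, `gru_cell`, and `gru_forward` are hypothetical, and the random initialization is only for illustration, not a training recipe.

```python
import math
import random
from typing import Dict, List

Vector = List[float]
Matrix = List[List[float]]


def sigmoid(x: float) -> float:
    return 1.0 / (1.0 + math.exp(-x))


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply a matrix (stored as a list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, v)) for row in m]


def affine(w: Matrix, v: Vector, b: Vector) -> Vector:
    """Compute W v + b."""
    return [a + c for a, c in zip(matvec(w, v), b)]


def init_gru(input_size: int, hidden_size: int, seed: int = 0) -> Dict[str, list]:
    """Hypothetical helper: small random weights and zero biases for the
    reset (r), update (z), and candidate (n) blocks."""
    rng = random.Random(seed)

    def mat(rows: int, cols: int) -> Matrix:
        return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

    params: Dict[str, list] = {}
    for g in "rzn":
        params["W_i" + g] = mat(hidden_size, input_size)   # input-to-hidden weights
        params["W_h" + g] = mat(hidden_size, hidden_size)  # hidden-to-hidden weights
        params["b_i" + g] = [0.0] * hidden_size
        params["b_h" + g] = [0.0] * hidden_size
    return params


def gru_cell(x: Vector, h_prev: Vector, p: Dict[str, list]) -> Vector:
    """One GRU time step (PyTorch-style formulation)."""
    gi = {g: affine(p["W_i" + g], x, p["b_i" + g]) for g in "rzn"}
    gh = {g: affine(p["W_h" + g], h_prev, p["b_h" + g]) for g in "rzn"}
    # Reset gate: how much of the previous state enters the candidate.
    r = [sigmoid(a + b) for a, b in zip(gi["r"], gh["r"])]
    # Update gate: how much of the previous state is kept as-is.
    z = [sigmoid(a + b) for a, b in zip(gi["z"], gh["z"])]
    # Candidate state: the reset gate scales the recurrent term.
    n = [math.tanh(a + ri * b) for a, ri, b in zip(gi["n"], r, gh["n"])]
    # New state: interpolate between the candidate and the previous state.
    return [(1.0 - zi) * ni + zi * hi for zi, ni, hi in zip(z, n, h_prev)]


def gru_forward(xs: List[Vector], hidden_size: int, p: Dict[str, list]) -> List[Vector]:
    """Run the cell over a sequence, starting from a zero hidden state."""
    h = [0.0] * hidden_size
    states = []
    for x in xs:
        h = gru_cell(x, h, p)
        states.append(h)
    return states


params = init_gru(input_size=3, hidden_size=4, seed=42)
sequence = [[1.0, 0.0, -1.0], [0.5, 0.5, 0.5], [0.0, -1.0, 1.0]]
states = gru_forward(sequence, hidden_size=4, p=params)
print(len(states), len(states[-1]))  # 3 4
```

Pushing the update-gate bias strongly positive drives `z` toward 1, so the cell simply copies its previous state forward. This is the mechanism that lets information (and gradients) survive across many time steps.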

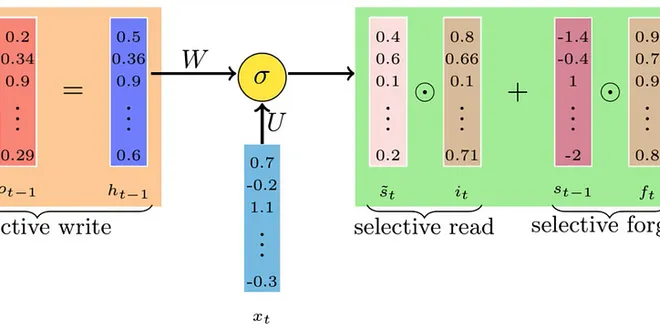

The Math Behind Gated Recurrent Units

Gated Recurrent Units (GRUs) are a powerful type of recurrent neural network (RNN) designed to handle sequential data efficiently. In this article, we’ll explore what GRUs are, and…

📚 Read more at Towards Data Science

Gated Recurrent Units (GRU)

As RNNs and particularly the LSTM architecture (Section 10.1) rapidly gained popularity during the 2010s, a number of papers began to experiment with simplified architectures in hopes of retaining t...

📚 Read more at the Dive into Deep Learning book

Gated Recurrent Units (GRU) — Improving RNNs

In this article, I will explore a standard implementation of recurrent neural networks (RNNs): gated recurrent units (GRUs). GRUs were introduced in 2014 by Kyunghyun Cho et al. and are an improvement...

📚 Read more at Towards Data Science

Unlocking Sequential Intelligence: The Power and Efficiency of Gated Recurrent Units in Deep…

Unlocking Sequential Intelligence: The Power and Efficiency of Gated Recurrent Units in Deep Learning. Abstract. Context: Gated Recurrent Units (GRUs) have emerged as a formidable architecture within the...

📚 Read more at Python in Plain English

Deep Dive into Gated Recurrent Units (GRU): Understanding the Math behind RNNs

Gated Recurrent Unit (GRU) is a simplified version of Long Short-Term Memory (LSTM). Let’s see how it works in this article. This article will explain the working o...

📚 Read more at Towards AI

Long Short Term Memory and Gated Recurrent Unit’s Explained — ELI5 Way

Hi all, welcome to my blog “Long Short Term Memory and Gated Recurrent Unit’s Explained — ELI5 Way”. This is my last blog of the year 2019. My name is Niranjan Kumar and I’m a Senior Consultant Data…

📚 Read more at Towards Data Science

Recurrent Neural Networks — Part 4

In this blog post, we introduce the concept of gated recurrent units. GRUs have fewer parameters than the LSTM, yet empirically still yield similar performance.

📚 Read more at Towards Data Science
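The "fewer parameters" claim can be made concrete with a small count. This sketch assumes single-layer, unidirectional cells in which every block has an input-to-hidden matrix, a hidden-to-hidden matrix, and two bias vectors, which is how PyTorch's `nn.GRU` and `nn.LSTM` lay out their parameters; other implementations may keep a different number of biases. The function names are hypothetical.

```python
def recurrent_params(input_size: int, hidden_size: int, num_blocks: int) -> int:
    """Parameters of one unidirectional recurrent layer with `num_blocks`
    gate/candidate blocks, each holding an input-to-hidden weight matrix,
    a hidden-to-hidden weight matrix, and two bias vectors."""
    per_block = hidden_size * input_size + hidden_size * hidden_size + 2 * hidden_size
    return num_blocks * per_block


def gru_params(input_size: int, hidden_size: int) -> int:
    # Three blocks: reset gate, update gate, candidate state.
    return recurrent_params(input_size, hidden_size, 3)


def lstm_params(input_size: int, hidden_size: int) -> int:
    # Four blocks: input, forget, and output gates plus the cell candidate.
    return recurrent_params(input_size, hidden_size, 4)


gru, lstm = gru_params(128, 256), lstm_params(128, 256)
print(gru, lstm, gru / lstm)  # 296448 395264 0.75
```

Under these assumptions a GRU always has exactly three quarters of the parameters of an LSTM with the same input and hidden sizes, because it has three blocks instead of four.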

Understanding Gated Recurrent Neural Networks

I strongly recommend first learning how a recurrent neural network works before reading this post on gated RNNs. Before getting into the details, let us first discuss the need to…

📚 Read more at Analytics Vidhya

GRU Recurrent Neural Networks — A Smart Way to Predict Sequences in Python

A visual explanation of Gated Recurrent Units, including an end-to-end Python example of their use with real-life data.

📚 Read more at Towards Data Science

A deep dive into the world of gated Recurrent Neural Networks: LSTM and GRU

RNNs can be further improved with gated architectures, of which LSTM and GRU are two examples. This article explains both architectures in detail.

📚 Read more at Analytics Vidhya

GRU

Applies a multi-layer gated recurrent unit (GRU) RNN to an input sequence. For each element in the input sequence, each layer computes the following function: where h_t is the hidden state a...

📚 Read more at PyTorch documentation
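For reference, the per-step computation described in the PyTorch `torch.nn.GRU` documentation can be written as follows, where σ is the sigmoid, ⊙ is the elementwise product, and r_t, z_t, and n_t are the reset gate, update gate, and candidate ("new") state. This is a transcription for convenience; the official documentation remains the authoritative form.

```latex
\begin{aligned}
r_t &= \sigma\left(W_{ir} x_t + b_{ir} + W_{hr} h_{t-1} + b_{hr}\right) \\
z_t &= \sigma\left(W_{iz} x_t + b_{iz} + W_{hz} h_{t-1} + b_{hz}\right) \\
n_t &= \tanh\left(W_{in} x_t + b_{in} + r_t \odot \left(W_{hn} h_{t-1} + b_{hn}\right)\right) \\
h_t &= (1 - z_t) \odot n_t + z_t \odot h_{t-1}
\end{aligned}
```

Note that this variant applies the reset gate after the hidden-to-hidden product; Cho et al.'s original formulation applies it to h_{t-1} before the product, so the two are close but not identical.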

[NIPS 2017/Part 1] Gated Recurrent Convolution NN for OCR with Interactive Code [ Manual Back Prop…

So this is the first part of implementing a Gated Recurrent Convolutional Neural Network. I will cover the parts one by one, so today let's implement a simple Recurrent Convolutional Neural Network as a…

📚 Read more at Towards Data Science